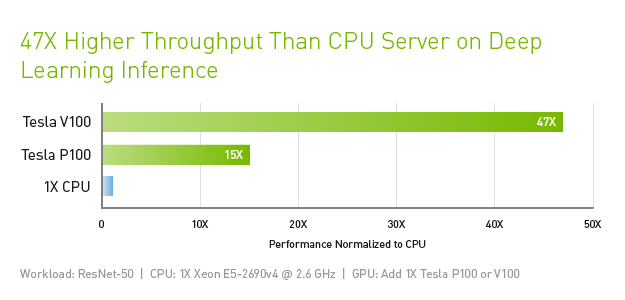

How Amazon Search achieves low-latency, high-throughput T5 inference with NVIDIA Triton on AWS | AWS Machine Learning Blog

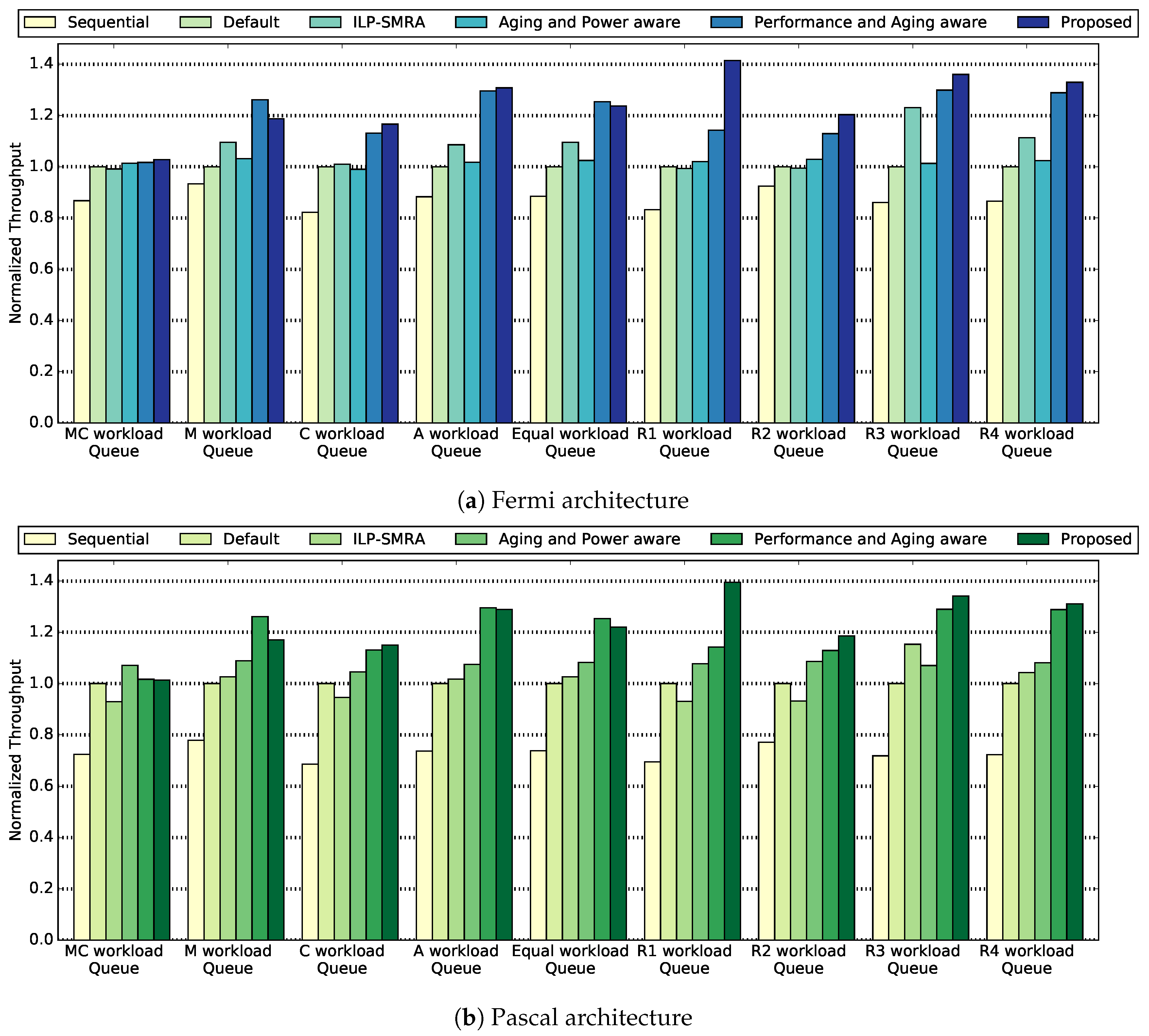

Electronics | Improving GPU Performance with a Power-Aware Streaming Multiprocessor Allocation Methodology

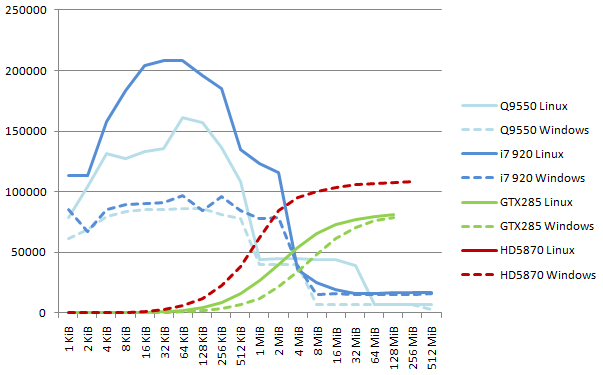

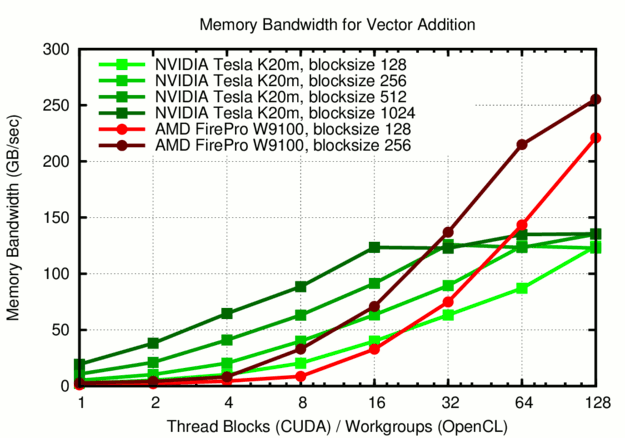

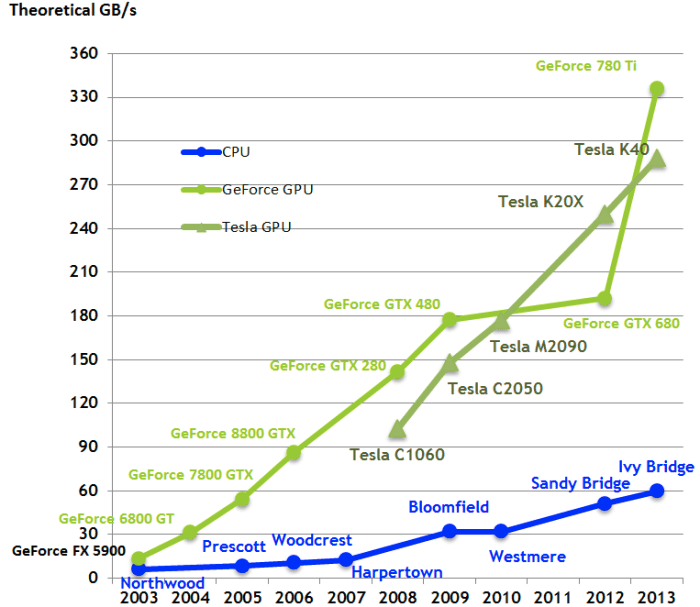

GPUs greatly outperform CPUs in both arithmetic throughput and memory...

Throughput of the GPU-offloaded computation: short-range non-bonded...

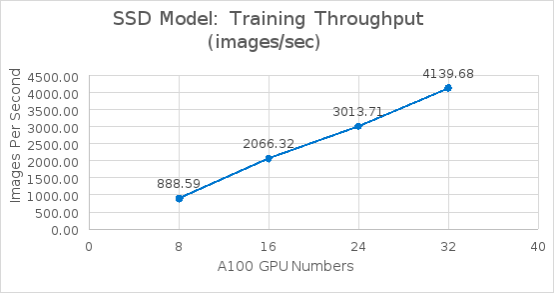

Test results and performance analysis | PowerScale Deep Learning Infrastructure with NVIDIA DGX A100 Systems for Autonomous Driving | Dell Technologies Info Hub

NVIDIA Ada Lovelace 'GeForce RTX 40' Gaming GPU Detailed: Double The ROPs, Huge L2 Cache & 50% More FP32 Units Than Ampere, 4th Gen Tensor & 3rd Gen RT Cores

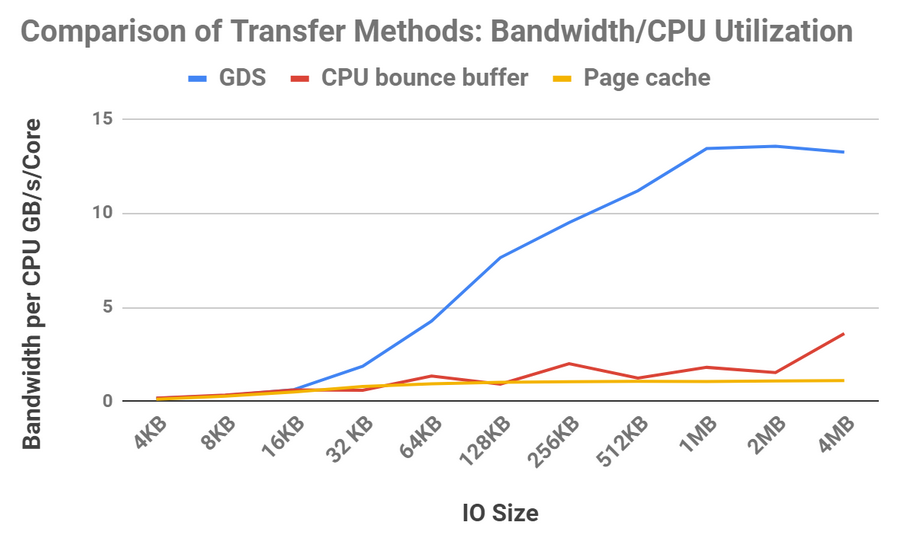

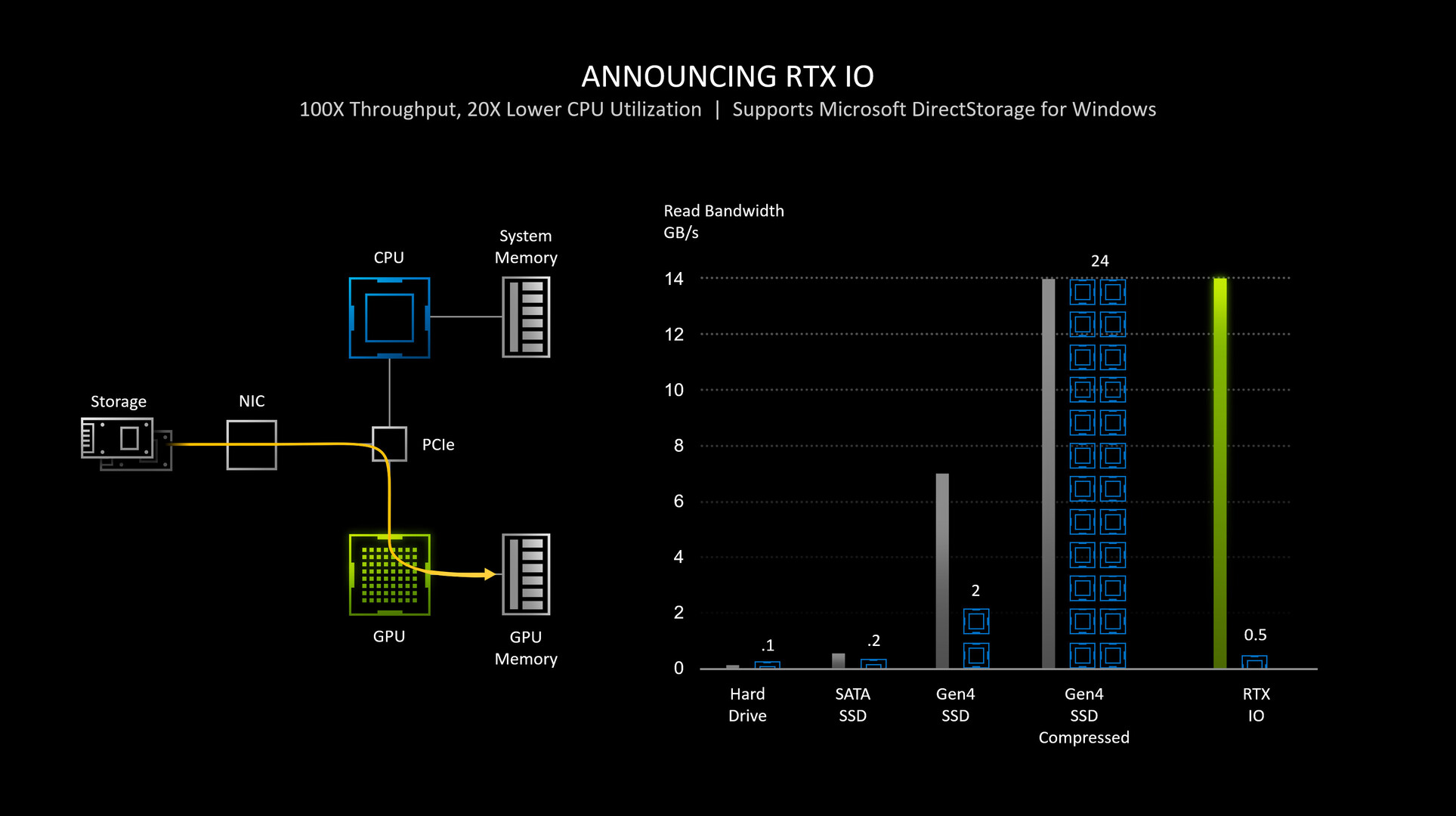

NVIDIA RTX IO Detailed: GPU-assisted Storage Stack Here to Stay Until CPU Core-counts Rise | TechPowerUp